How to explain project impact with metrics

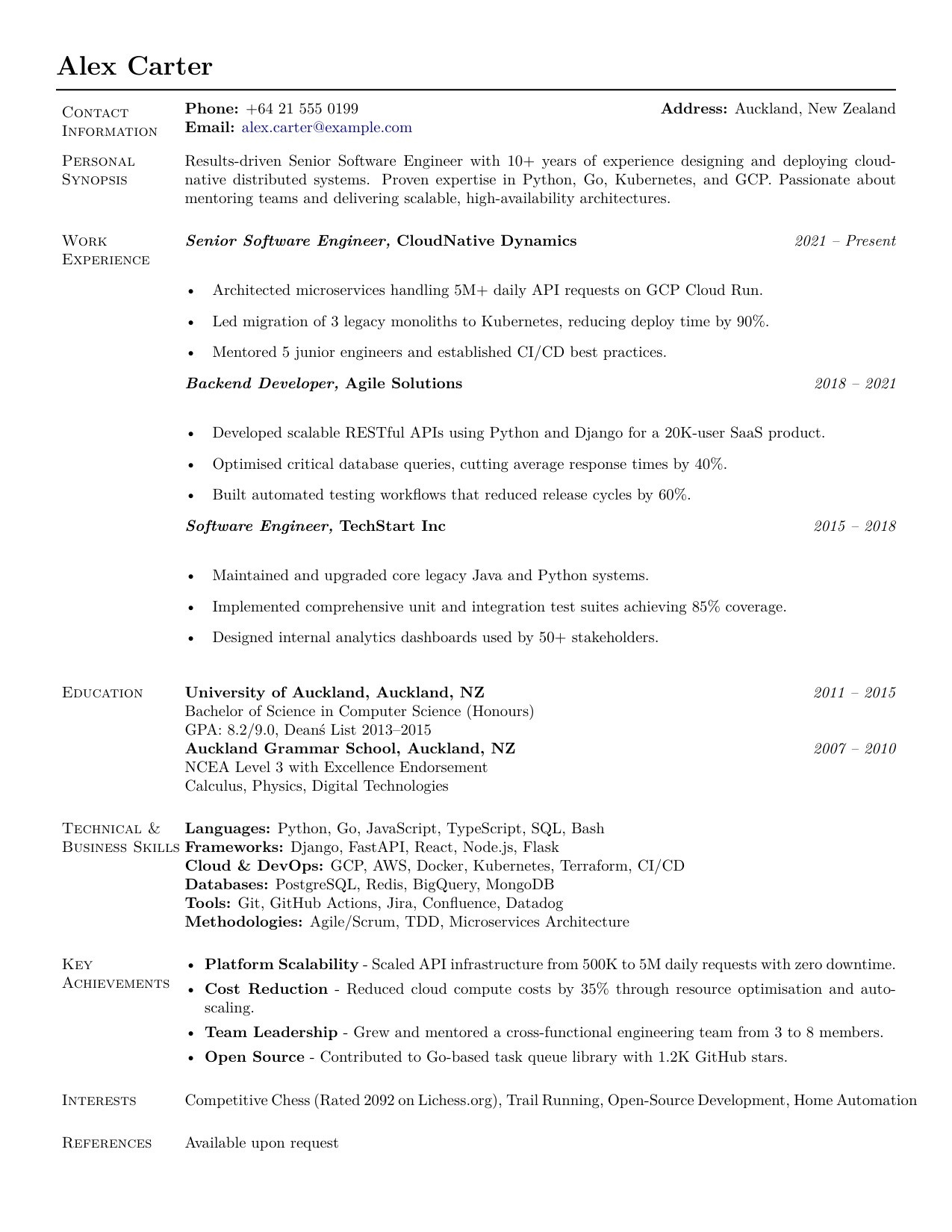

Metrics make resume impact easier to compare, but only when they are honest, specific, and tied to the work you actually did. The best numbers explain change, scale, quality, risk, speed, or value.

Impact metrics

Use numbers to clarify the evidence, not to make the bullet louder.

The advice to quantify resume bullets is useful, but easy to misuse. Numbers can make a project clearer, faster to scan, and easier to compare. They can also make a resume feel fake if they are stretched, unexplained, or bolted onto work that was never measured.

Career-center guidance is more balanced than the internet version. USFCA frames accomplishment bullets as action, object, context, and results. Yale recommends showing the action, project, and result, with quantification where possible. UC Davis and Princeton both emphasize context and outcomes, not numbers for their own sake. The practical lesson is simple: use metrics when they clarify the truth, and use concrete scope when exact numbers are not available.

A metric is useful only if you can explain where it came from and why it belongs in the story.

Metric map

The six metric types that make resume bullets clearer

Different roles create different kinds of impact. Pick the measurement that helps the reader understand the work, instead of forcing every bullet into revenue or percentage language.

Speed

Cycle time, response time, deployment time, onboarding time, approval time, or manual hours saved.

Quality

Defects reduced, accuracy improved, audit issues closed, error rates lowered, or rework avoided.

Scale

Users, accounts, tickets, patients, students, transactions, data volume, regions, or team size.

Value

Revenue influenced, cost avoided, budget managed, retention improved, or productivity gained.

Risk

Incidents prevented, compliance gaps resolved, downtime reduced, security exposure lowered, or backlog cleared.

Adoption

Feature usage, customer activation, stakeholder participation, training completion, or process uptake.

Choose the right kind of metric

Start with the question the hiring team cares about: what improved because of the work? For a software engineer, it might be latency, uptime, release confidence, developer time, test coverage, or customer adoption. For operations, it might be throughput, cost variance, process time, error rate, or stakeholder visibility.

The best metric is not always the biggest number. It is the number that explains the project. A small metric tied to the real problem is stronger than a dramatic percentage that no one can trust.

- Speed: reduced report prep from 6 hours to 90 minutes.

- Quality: cut duplicate case entries by 28% after redesigning intake checks.

- Scale: supported rollout across 14 teams, 3 regions, or 40K monthly users.

- Risk: closed 31 audit findings before the renewal deadline.

Add a baseline where possible

A number without a baseline can feel impressive but vague. 'Reduced processing time by 30%' is better than 'improved processing time', but 'reduced processing time from 10 days to 7 days' is clearer because the reader can understand the real change.
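
The arithmetic behind a baseline is simple enough to check before it goes on the page. The sketch below is only an illustration: the helper names `percent_reduction` and `describe_change` are hypothetical, and the wording of the output is one possible phrasing, not a required format. It shows how the "10 days to 7 days" example yields the 30% figure while keeping the real values visible.

```python
def percent_reduction(before: float, after: float) -> float:
    """Return the drop from `before` to `after` as a percentage of `before`."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (before - after) / before * 100


def describe_change(label: str, before: float, after: float, unit: str) -> str:
    """Phrase a change with its baseline so the reader sees the actual values."""
    pct = percent_reduction(before, after)
    return (
        f"Reduced {label} from {before:g} {unit} "
        f"to {after:g} {unit} ({pct:.0f}% lower)"
    )


# The processing-time example from the paragraph above.
print(describe_change("processing time", 10, 7, "days"))
# Reduced processing time from 10 days to 7 days (30% lower)
```

Both values must be in the same unit before comparing them: 6 hours to 90 minutes, for instance, would be entered as 360 and 90 minutes.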

Baselines can be before-and-after values, previous-year comparisons, team averages, SLA targets, budget limits, sprint goals, or historical volume. If the baseline is confidential, you can still show the direction and scope without exposing sensitive details.

- Before and after: from 12 manual steps to 4 automated checks.

- Frequency: from weekly reporting delays to same-day reporting.

- Target: exceeded quarterly renewal target by 11%.

- Comparison: reduced escalations below the prior six-month average.

Use scope when exact numbers are unavailable

Not every role gives you perfect dashboards. That does not mean the bullet has to be empty. Scope can show the size of the work: number of stakeholders, duration, team size, systems touched, customers supported, tickets handled, training sessions delivered, data sets cleaned, or markets covered.

Scope is also safer than inventing an outcome. If you cannot prove that a feature increased conversion by 15%, say what you can prove: what you built, who used it, what workflow it supported, and what problem it was designed to solve.

- Supported 9 account managers during a CRM migration across 1,800 active customer records.

- Built Python validation scripts for 12 recurring data files used in monthly finance reporting.

- Coordinated testing with product, design, and support before launch to 3 customer segments.

Match metrics to the role

A metric is only persuasive when it matches the role's definition of success. A backend platform role may care about reliability, latency, incident reduction, deployment frequency, or developer productivity. A customer-success role may care about adoption, renewal, onboarding time, expansion, or case resolution. A finance role may care about close time, variance, audit readiness, and forecast accuracy.

Before rewriting bullets, mark the outcomes repeated in the job description. Then choose metrics that speak to those outcomes. This keeps the resume tailored without turning it into a keyword exercise.

- Software engineering: latency, uptime, build time, defect rate, release frequency, test coverage, data volume.

- Operations: cycle time, throughput, inventory accuracy, cost variance, quality defects, handoff delays.

- Sales or customer success: pipeline, quota, win rate, renewal rate, adoption, response time, account growth.

- Healthcare or education: patient volume, documentation accuracy, caseload, learning progress, training completion.

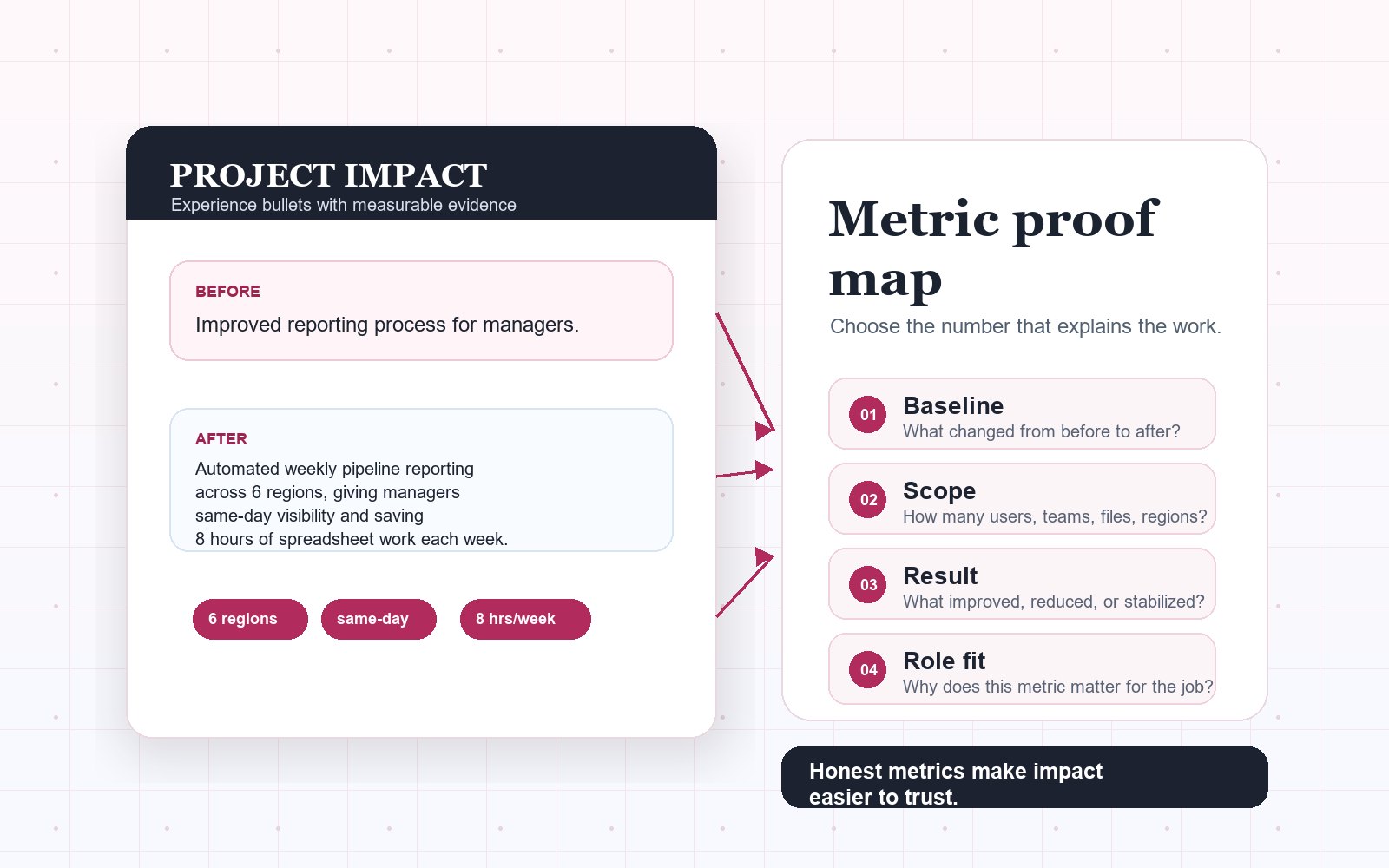

Turn responsibilities into measurable evidence

A responsibility tells the reader what the job involved. An impact bullet shows what changed because you did the work. Use a simple pattern: action, context, result. If a metric is available, put it near the result. If a metric is not available, put concrete scope near the context.

This works across seniority levels. Early-career candidates can use projects, coursework, internships, volunteer work, and support volume. Senior candidates should show bigger scope: architecture decisions, team leverage, system reliability, business outcomes, cost control, mentorship, or cross-functional execution.

- Before: Responsible for weekly reporting.

- After: Automated weekly sales reporting across 6 regions, reducing manual spreadsheet work by 8 hours per week.

- Before: Helped improve onboarding.

- After: Rebuilt onboarding checklist used by 42 new hires, cutting missed setup steps during the first week.

Keep the number defensible

A resume is not the place to guess wildly. If you use a metric, be ready to explain the source: dashboard, ticket system, financial report, survey, user analytics, SLA report, before-and-after sample, manager feedback, or your own project notes. If the number is an estimate, make it conservative and avoid false precision.

Do not use confidential numbers, private customer information, or internal data that would violate policy. You can usually reframe sensitive impact as ranges, percentages, relative improvements, or scope without exposing restricted details.

- Use rounded numbers when exact precision is not important: about 30 users, 12 teams, 4 hours per week.

- Avoid claims like 'improved UX by 40%' unless you can explain exactly how that was measured.

- When in doubt, choose conservative scope over an exciting but fragile metric.
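
One way to keep an estimate conservative and free of false precision is to round it down to a coarse step before writing it up. The following is a minimal sketch under stated assumptions: the function names `conservative_round` and `hedge`, the default step of 5, and the choice to round down (which suits improvements where a larger number sounds better) are all illustrative, not a standard rule.

```python
import math


def conservative_round(estimate: float, step: float = 5) -> float:
    """Round an estimate DOWN to the nearest multiple of `step`.

    Rounding down is an assumption that fits "bigger is better" figures
    such as hours saved or percentage improvement.
    """
    if step <= 0:
        raise ValueError("step must be positive")
    return math.floor(estimate / step) * step


def hedge(estimate: float, step: float = 5, unit: str = "%") -> str:
    """Format a rounded estimate with 'about' to signal it is approximate."""
    return f"about {conservative_round(estimate, step):g}{unit}"


print(hedge(37.4))                          # about 35%
print(hedge(8.6, step=1, unit=" hours"))    # about 8 hours
```

The step size should reflect how rough the source is: a back-of-the-envelope estimate deserves a coarser step than a figure read from a dashboard.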

Example rewrite

A vague project bullet turned into measurable proof

The target role values automation, stakeholder visibility, and operational efficiency.

Before

Worked on reporting automation and improved visibility for managers.

After

Automated weekly pipeline reporting across 6 sales regions, giving managers same-day visibility into stalled deals and reducing manual spreadsheet work by 8 hours per week.

Why the rewrite works:

- It names the action and the system changed.

- It adds scope: 6 regions and weekly cadence.

- It explains the result in human terms: same-day visibility and time saved.

The honest metric checklist

- What changed because of this work?

- Can you show a before-and-after baseline, target, volume, frequency, or scope?

- Does the metric match the role's definition of success?

- Can you explain where the number came from in an interview?

- Is the number safe to share without exposing confidential information?

- Would the bullet still make sense if the number were removed?

Common metric mistakes to avoid

- Adding a percentage because the bullet feels weak, not because the result was measured.

- Using fake precision, such as 37.4%, when the actual source was only an estimate.

- Claiming team impact as if it were your individual contribution.

- Choosing revenue or growth metrics when the role mainly values reliability, quality, risk, or process control.

- Letting every bullet become a number-heavy sentence that is hard to read.